Migrate Microsoft-based IT environment to OSS

Preamble

This project is about migrating an IT environment mostly based on Microsoft products to open source software.

The goal is to become independent from closed software and non-European cloud providers.

Starting Point

My IT environment has grown over 25 years. I use it mostly for testing and learning - and my personal PC for gaming 😉

- Two physical x86 servers run Hyper-V Core with several virtual machines, which run Windows too - except for two instances for Zabbix and a Linux-based test machine.

- One Synology NAS as storage for daily backup.

- Two external HDDs for monthly backup.

These are the services and products hosted/used in my environment:

- Microsoft Active Directory (with Azure Entra Connect)

- Microsoft Exchange

- Microsoft Windows as file server

- Microsoft Windows as build server (with 2x2 Azure "DevOps" Agents installed)

- Microsoft Windows to host WordPress in IIS (very ugly)

- Microsoft Windows with IIS as reverse proxy (even uglier!)

- Zabbix (appliance) for monitoring

- OneDrive as personal storage

- Microsoft Teams for video calls

- Microsoft Office

- Microsoft Visual Studio

I set myself some rules/requirements for the migration:

- Do the migration as if it were for a production-critical environment, so no downtime if possible.

- Do the migration in small steps. Since I do this migration in my spare time, I might need to pause but still need a working environment.

- Preserve backward compatibility: no breaking changes.

But most important: have a long-term strategy.

From the beginning it was clear that the future environment should be Linux-based. I mainly use openSUSE Leap, a conservative Linux distribution that prefers stability over including the latest "hot" stuff. During the migration I used AlmaLinux and Fedora too.

I thought about using Kubernetes but then decided to start with rootless Podman and quadlet files for configuration. Kubernetes would have added much more complexity without offering many advantages in the beginning. A migration from rootless Podman to Kubernetes should be possible later.

The Plan

- Replace the Hypervisors

- Migrate file server

- Set up the reverse proxy

- Set up the new monitoring system

- Migrate Active Directory and set up something similar for Linux

- Set up a broker/proxy to federate the Windows and Linux users

- Replace Exchange, Office and Teams

- Replace Azure DevOps with a self-hosted solution.

It should be possible to keep working with Windows at first and then move to Linux step by step.

Hypervisor

For me the most obvious step was to replace Hyper-V first. I decided to use Proxmox. It is lightweight and has great cluster support.

Proxmox is an all-in-one product which comes with an easy installer to start from a USB-stick or any other boot media. After installation almost all further configuration can be done through the web interface. There is a CLI too.

The migration of VMs was quite easy: simply migrate server by server. In my case, I moved (squeezed) all VMs onto one server and installed Proxmox on the other one. Then I migrated all VMs to that new Proxmox server and installed Proxmox on the first server as well.

Migrating a VM from Hyper-V to Proxmox is easy but might take some time:

- Shut down the VM (yes, this might cause downtime if you're not using clusters)

- Use qemu-img (CLI tool) to convert each VHDX file into qcow2 format

- Copy the converted qcow2 files to the target Proxmox server and import them.

- Recreate the VM in Proxmox using the imported files.

- Start the VM.

- Install the VirtIO tools.

- Reboot VM and check settings, such as IP addresses etc.

I did this manually, but I'm sure this can be automated using PowerShell and SSH.
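The conversion and import steps can be sketched as a small command builder. This is only an illustration in Python rather than PowerShell: the paths, the VM id 101 and the storage name local-lvm are made-up values, and it assumes that qemu-img is available where the VHDX files are and that the import runs on the Proxmox host. Note that qm importdisk expects the target VM to exist already, so the order may differ slightly from the list above.

```python
from pathlib import PurePosixPath


def convert_command(vhdx_path: str, out_dir: str) -> list[str]:
    """Build the qemu-img call that converts one VHDX disk to qcow2."""
    target = PurePosixPath(out_dir) / (PurePosixPath(vhdx_path).stem + ".qcow2")
    # -f names the source format, -O the output format.
    return ["qemu-img", "convert", "-f", "vhdx", "-O", "qcow2", vhdx_path, str(target)]


def import_command(vm_id: int, qcow2_path: str, storage: str) -> list[str]:
    """Build the Proxmox call that imports a converted disk for an existing VM."""
    return ["qm", "importdisk", str(vm_id), qcow2_path, storage]


# Hypothetical disk, VM id and storage name, for illustration only.
convert = convert_command("/mnt/export/web01.vhdx", "/var/tmp/import")
print(" ".join(convert))
print(" ".join(import_command(101, convert[-1], "local-lvm")))
```

The functions only build the command lines; running them (for example with subprocess.run or over SSH) and copying the files between the hosts is left out on purpose.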

Goal:

- Replace Hyper-V with Proxmox

Monitoring

Zabbix is a great tool for monitoring. Its primary use case is to monitor the entire IT infrastructure. For (virtual) machines you can install the Zabbix agent, which monitors the machine it is installed on, but you can also use SNMP to monitor other devices such as printers, routers, etc.

Goal:

- Dedicated postgres database

- pgAdmin at port 8080/tcp

- Zabbix server at port 10051

- Zabbix web UI at port 9080/tcp

- Zabbix agent monitoring that host

Configuration files:

- pgstack.network

- postgres.container

- postgres.env

- pgadmin.container

- pgadmin.env

- zabbix-server.container

- zabbix-server.env

- zabbix-web.container

- zabbix-web.env

- zabbix-agent.container

- zabbix-agent.env
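To give an idea of what such a quadlet file contains, here is a minimal sketch that renders a .container unit as text. It is not my actual configuration: the image name, the description and the restart policy are assumptions, and only the file names and the port come from the list above.

```python
def render_container_unit(name: str, image: str, env_file: str,
                          network: str, ports: list[str]) -> str:
    """Render a minimal quadlet .container unit as text."""
    lines = [
        "[Unit]",
        f"Description={name} container",
        "",
        "[Container]",
        f"ContainerName={name}",
        f"Image={image}",
        # A relative path is resolved relative to the unit file.
        f"EnvironmentFile={env_file}",
        # Refers to the network defined by the matching .network unit.
        f"Network={network}",
    ]
    lines += [f"PublishPort={port}" for port in ports]
    lines += ["", "[Service]", "Restart=always",
              "", "[Install]", "WantedBy=default.target", ""]
    return "\n".join(lines)


# Assumed image name; the port is the Zabbix server port from the goal list.
print(render_container_unit(
    "zabbix-server",
    "docker.io/zabbix/zabbix-server-pgsql:latest",
    "zabbix-server.env",
    "pgstack.network",
    ["10051:10051"],
))
```

For rootless Podman, such files typically live in ~/.config/containers/systemd/ and are picked up after systemctl --user daemon-reload.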

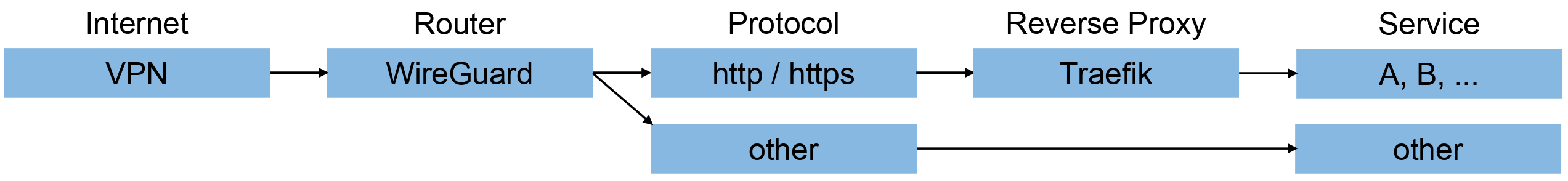

VPN + Reverse Proxy

I use OVPN as my VPN provider together with WireGuard. It's a very nice and easy-to-maintain combination. There is no hard requirement to use a VPN. If you have a static IP and your router supports DNAT, you should get the same functionality as with the VPN. If your router applies SNAT to forwarded connections, incoming connections will show your router's IP instead of the client IP.

Traefik is a powerful reverse proxy. I made a setup to be able to configure external and internal traffic individually in one Traefik instance.

Goal:

- Small static instance configuration

- Separate dynamic individual service configurations

- Internal endpoints: 80/tcp and 443/tcp (mapped internally to 8080/tcp and 8443/tcp)

- External endpoints: 9080/tcp and 9443/tcp (my router forwards 80/tcp and 443/tcp to these ports)
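The split between internal and external traffic can be sketched with separate Traefik entry points and routers that are bound to only some of them. The following is an illustration, not my real configuration: the entry point names, the host name and the backend URL are hypothetical, and it assumes that Traefik listens on 8080/8443 for internal and 9080/9443 for external traffic.

```python
def static_entry_points(entry_points: dict[str, int]) -> str:
    """Render the entryPoints section of a Traefik static configuration."""
    lines = ["entryPoints:"]
    for name, port in entry_points.items():
        lines += [f"  {name}:", f'    address: ":{port}"']
    return "\n".join(lines) + "\n"


def dynamic_router(name: str, host: str, backend_url: str,
                   entry_points: list[str]) -> str:
    """Render a dynamic configuration with one router and one service.

    The router only answers on the listed entry points, so a service
    can be reachable internally without being exposed externally.
    """
    lines = [
        "http:",
        "  routers:",
        f"    {name}:",
        f'      rule: "Host(`{host}`)"',
        "      entryPoints:",
        *[f"        - {ep}" for ep in entry_points],
        f"      service: {name}",
        "      tls: {}",
        "  services:",
        f"    {name}:",
        "      loadBalancer:",
        "        servers:",
        f'          - url: "{backend_url}"',
    ]
    return "\n".join(lines) + "\n"


# Hypothetical names and addresses.
print(static_entry_points({
    "internal-http": 8080, "internal-https": 8443,
    "external-http": 9080, "external-https": 9443,
}))
print(dynamic_router("zabbix", "zabbix.example.internal",
                     "http://192.0.2.10:9080", ["internal-https"]))
```

The first output would go into the small static configuration, the second into one file per service in the dynamic configuration directory.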

Global and shared configurations:

- disable-http2.yml

- force-insecure-transport.yml

- redirect-to-https.yml

- redirect-to-root-path.yml

- tls-certificate.yml

Some services:

A bit more complex configuration for Exchange:

Microsoft Entra / Active Directory

Migrating Active Directory is a critical step - always. Even small changes can have big effects.

The major alternative to Microsoft Active Directory is Samba Active Directory. Active Directory is good at managing Windows users and the Windows OS. It works for Linux too, but GPOs etc. have no effect on Linux.

For Linux, I found FreeIPA, which offers something similar to GPOs, though it works a bit differently. It primarily allows managing user accounts and hosts. Linux instances can join a FreeIPA domain and users can log in if they have the permission for that. You can also control which services a user is allowed to use and/or whether the user is a super user or can obtain super user privileges.

Active Directory and FreeIPA can be configured to trust each other, in the same way that two Active Directory domains can be configured to trust each other.

Importantly, both support LDAP. This will matter for the identity provider later.

The procedure for the migration is then quite straightforward:

- Install a Samba Active Directory Domain Controller (not available as container image).

- Join the existing Active Directory domain (or install a new domain if you don't want to keep your existing users).

- Install FreeIPA and create a new domain (not available as container image).

- Configure a trust between the FreeIPA and the Samba AD domain.

In detail:

- I installed Samba AD DC on OpenSUSE Leap 16. Everything worked fine, except the firewall configuration. I had to disable the firewall to continue.

- I joined the Samba AD DC to my existing domain and followed the official Microsoft procedure for migrating the AD roles from one DC to another. I enforced a full replication, but you can also wait 24 hours (or maybe longer if your forest is very big) to let the DCs do that on their own. Then I demoted my Windows-based DC to a regular domain computer and could safely decommission that VM. A very nice thing about Samba AD DC is that it is nearly 100% compatible (as far as I tested) with PowerShell commands and the usual RSAT applications under Windows, which makes administration simple.

- I installed FreeIPA on AlmaLinux. (In 2025, there was no FreeIPA server for OpenSUSE Leap 16.) The installation was as simple as the Samba AD DC installation. Sometimes the wording is a bit different, but nothing complicated. Doing some test joins with Linux clients led me directly to the issues in my FreeIPA configuration, and I could quickly solve them. As with Samba AD DC, the firewall was a problem and I had to disable it to continue.

- Setting up a trust between the Samba AD domain and the FreeIPA domain was a bit tricky. I might write a separate tutorial about that later. Sometimes the wording in the official documentation is misleading, especially when it comes to incoming and outgoing trusts and to identifying which side is meant in a given situation. The documentation and tutorials on the internet jump quite often between the two sides. Testing is quite simple: try to obtain a Kerberos ticket from the other side using kinit. If that works and you see your ticket with klist, everything works fine. Do not expect to see the users of the other side of the trust. That's not how it works.

- I tested logging in with a user of the Samba AD domain on a Windows machine.

- I tested logging in with a user of the FreeIPA domain on a Linux machine.

- I tested using a Linux resource which is managed by my FreeIPA domain with a Windows user on a Windows machine.
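The kinit/klist check from the trust step can be wrapped in a few lines. This is a minimal sketch under assumptions: the user and domain names are hypothetical, the Kerberos client tools are installed on the machine, and the realm is the upper-cased DNS domain name, which is the usual convention.

```python
import subprocess


def principal(user: str, domain: str) -> str:
    """Build a Kerberos principal; realms are conventionally upper case."""
    return f"{user}@{domain.upper()}"


def can_get_ticket(user: str, domain: str) -> bool:
    """Ask for a ticket with kinit (prompts for the password), then list it."""
    if subprocess.run(["kinit", principal(user, domain)]).returncode != 0:
        return False
    # klist prints the ticket cache; a zero exit code means a cache exists.
    return subprocess.run(["klist"]).returncode == 0


# Hypothetical user of the other side of the trust.
print(principal("alice", "ad.example.test"))
```

Calling can_get_ticket on a FreeIPA-joined Linux machine with a Samba AD user (and the other way round) covers both directions of the trust.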

With a setup like this, you can continue with your users in the Windows world and migrate them to FreeIPA over time.

From my point of view, Microsoft Entra is a trap. They want you to have your Active Directory in their cloud under their control. I have read so many times that one should stop using on-prem Active Directory and use Azure Entra only. I never had problems with Azure Entra Connect. It synchronizes my on-prem users nicely to my Azure Entra. The good thing about not having moved to Azure Entra is that I was able to do this migration with Samba AD and FreeIPA without any problems. Azure Entra Connect still works fine, even though the Active Directory domain runs with Samba now. There is currently no Linux version of Azure Entra Connect, so I will have to continue with that Windows Server VM for now.

Why is Azure Entra required? Because I use(d) Azure DevOps and that requires a Microsoft Account. More on that later.

Goal:

- VM with Samba AD DC and domain for Windows clients.

- VM with FreeIPA and domain for Linux clients.

- VM with shared DNS server (was required for my setup, maybe not relevant for you)

Identity Provider

Modern applications are not tightly coupled to something like Active Directory. Instead, they use OIDC (or SAML). Some require that you populate your users first, but most (in my case all except one) populate the user on demand.

A powerful product to work as an IDP broker is Keycloak.

- Keycloak can act as an endpoint for OIDC/SAML clients

- Keycloak can federate LDAP and OIDC identity providers to work as the source of truth regarding your users.

- Keycloak can handle multiple independent realms in one instance.

- Each Keycloak realm can handle multiple applications ("clients").

- The login process flow can be configured if the default configuration does not fit.

The plan for my migration:

- Install Keycloak.

- Create a dedicated realm.

- Add the Samba AD domain as LDAP source.

- Add the FreeIPA domain as LDAP source.

- Configure Keycloak so that users look the same through OIDC/SAML, no matter whether they come from the Samba AD domain or the FreeIPA domain.

The idea behind unifying the users is to allow moving a user between the domains without breaking their identity. This is very important for smooth user migrations.

The LDAP integration in Keycloak can be configured to filter users and groups. The user and group filters can be helpful to avoid exposing all users or groups. You can also configure it to read child groups of groups to preserve hierarchies in Keycloak. This can be used to manage permissions based on group membership hierarchy.
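What "looking the same" means can be illustrated with a small mapping function. In Keycloak itself this is configured with the LDAP attribute mappers of each federation source, not with code; the sketch below only shows the intent. It assumes the usual attribute names (sAMAccountName in Samba AD, uid in FreeIPA) and the source labels "samba-ad" and "freeipa" are made up for this example.

```python
# LDAP attribute that holds the login name, per directory type.
USERNAME_ATTRIBUTE = {"samba-ad": "sAMAccountName", "freeipa": "uid"}


def unified_claims(entry: dict[str, str], source: str) -> dict[str, str]:
    """Map an LDAP entry from either directory to the same OIDC claims."""
    return {
        "preferred_username": entry[USERNAME_ATTRIBUTE[source]].lower(),
        "email": entry["mail"].lower(),
        "given_name": entry["givenName"],
        "family_name": entry["sn"],
    }


# The same (hypothetical) person, once in each directory.
ad_entry = {"sAMAccountName": "Alice", "mail": "alice@example.test",
            "givenName": "Alice", "sn": "Example"}
ipa_entry = {"uid": "alice", "mail": "alice@example.test",
             "givenName": "Alice", "sn": "Example"}

print(unified_claims(ad_entry, "samba-ad") == unified_claims(ipa_entry, "freeipa"))
```

If both sources produce identical claims, an application behind Keycloak cannot tell in which domain the user currently lives, which is what makes moving users possible.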

Goal:

- Dedicated postgres database

- pgAdmin at port 8080/tcp

- Keycloak server at port 80/tcp and 443/tcp (mapped internally to 10080/tcp and 10443/tcp)

- Dedicated Keycloak realm with federated Samba AD domain and FreeIPA domain

Configuration files:

- pgstack.network

- postgres.container

- postgres.env

- pgadmin.container

- pgadmin.env

- keycloak.container

- keycloak.env

Exchange and Outlook

Replacing Microsoft Exchange looked like the most difficult thing when I started thinking about this migration. In the end, it was one of the simple parts. This has one good reason: grommunio.

Grommunio is an Exchange-like groupware which provides Exchange-compatible client APIs such as ActiveSync and Autodiscover. This means that you can migrate your mailboxes to grommunio and still use the same client applications. Don't get me wrong: grommunio does not become part of an Exchange cluster, it replaces Exchange entirely!

The hardware requirements, especially for RAM, are much lower than for Exchange.

But step by step:

- Install grommunio in a dedicated VM. (I installed the mailbox module only and skipped the other modules.)

- Use the installer assistant to configure your grommunio instance.

- You can use the LDAP integration to populate your users to grommunio. I added a cron job to use the grommunio CLI to perform a quick sync every minute and a full sync every 15 minutes.

- Use the admin web interface to configure and validate your DNS entries - this is maybe the most critical part. You can use the Microsoft Connectivity Analyzer to verify your setup. It works up to the point where it detects that there is a grommunio server at the other end instead of an Exchange server. That is enough for us, because it proves that the configuration is OK.

- Migrate your mailboxes from Exchange to grommunio. I did this using PowerShell for export and the grommunio CLI for import.
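The two sync intervals from the LDAP step translate into two crontab entries. The sketch below only renders the schedule; the two script paths are hypothetical wrappers and stand in for the actual grommunio CLI calls, which are not shown here.

```python
def crontab_lines(quick_cmd: str, full_cmd: str) -> list[str]:
    """Render crontab entries: quick sync every minute, full sync every 15 minutes."""
    return [
        f"* * * * * {quick_cmd}",
        f"*/15 * * * * {full_cmd}",
    ]


# Hypothetical wrapper scripts around the grommunio CLI.
for line in crontab_lines("/usr/local/bin/ldap-quick-sync.sh",
                          "/usr/local/bin/ldap-full-sync.sh"):
    print(line)
```

With this schedule both jobs start together at minutes 0, 15, 30 and 45, so the wrappers should tolerate running at the same time.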

If you have a reverse proxy working with Microsoft Exchange, as described above, you might be able to change the target IPs in the reverse proxy configuration to your grommunio server instead of the Exchange server. It should work out of the box. I had to delete the Outlook profiles on my clients, but that was not a big deal due to autodiscover.

Goal:

- Replace Microsoft Exchange with grommunio

- Preserve Exchange compatibility for clients

Storage

There are several options available to replace a Windows file server with something Linux-based. Instead of using a NAS directly, such as a Synology NAS, I decided to use TrueNAS in a VM.

TrueNAS is a very powerful platform. It works fine when joined to a Windows or FreeIPA domain. File shares can be managed in a similar way as in Windows. In addition, TrueNAS has a lot of options for replication, synchronization and backup.

The installation is easy:

- Install TrueNAS on a dedicated VM.

- Use the installer assistant to do the basic configuration such as IP address and hostname.

- Join your Samba AD domain or your FreeIPA domain.

- Use the web UI to configure the storage, file shares, backups etc.

I used OneDrive to back up my personal files. TrueNAS offers backup/synchronization jobs using rclone/rsync. You can configure files to be encrypted before upload and decrypted after download, which means that only encrypted data is stored in your cloud storage. That is quite nice!

I ordered cloud storage at Hetzner and configured my TrueNAS server to sync my data there - always encrypted. If that synchronization fails, I get a notification from TrueNAS, and another one when it works again.

In my local network, accessing the data is straightforward: use the storage via SMB.

I will explain later, how to use the storage over the internet using a browser or smartphone.

Goal:

- Replace Windows file server with TrueNAS

- Configure encrypted backup to cloud storage

Office

A modern alternative to Microsoft Office should be usable online from everywhere, at any time and in the browser. A powerful platform is Nextcloud, which supports two major office integrations: Collabora and ONLYOFFICE. Each of them has different advantages. Most important: both are compatible with Microsoft Office, so you can still use your Microsoft Office files.

Nextcloud is extendable with apps to get extra features or integrate third-party products.

Nextcloud integrates nicely with OIDC/SAML which means, that you are not restricted to a specific IDP.

You can create share-links similar to how you do it in OneDrive. Those links can have an expiry and/or can be revoked later.

You can also use Nextcloud to access files. Nextcloud can integrate several kinds of external (cloud) storage and lets you access them in the same way as any internal storage. This feature allows you to use any storage in your network from everywhere you have access to your Nextcloud instance, without the need to migrate/relocate your data. Nextcloud supports SMB, NFS, S3, WebDAV and several other standardized storage systems.

The Nextcloud app supports iOS and Android and synchronizes your files on those devices similar to OneDrive.

Nextcloud provides a container image called "Nextcloud AIO" - all in one. This setup works with a master container which then manages other containers for the individual services/apps. If you want to run this with rootless Podman and active SELinux, you need to grant the master container access to the Podman socket. I will write a separate guide regarding this another time.

Goal:

- Dedicated VM running Nextcloud AIO master container

- Integrate IDP such as Keycloak in Nextcloud

- Integrate external storages in Nextcloud

Configuration files:

- nextcloud-aio-mastercontainer.container

- nextcloud-aio-mastercontainer.env

- nextcloud_aio_mastercontainer.volume

Communication

One app that comes with Nextcloud is "Talk". It is an alternative to Microsoft Teams, but does not require installing an app on the device. It simply runs in the browser, even on iOS and Android.

Meetings are called conversations and you can

- invite your contacts or guests outside of your organization.

- share screen(s), windows or areas of your screen, which is very helpful when you have a very big screen and the other participants don't.

- share local sound.

- share files from any Nextcloud storage.

Nextcloud Talk supports

- confidential levels similar to Teams - if enabled in the Nextcloud administrator options.

- recording meetings - if enabled in the Nextcloud administrator options.

- blurring or changing the background.

- SIP gateways to do regular phone calls.

Goal:

- Expose Nextcloud Talk via port 3478 tcp/udp

Software Development

I used Azure DevOps before this migration for most of my software development projects. Some projects were hosted at GitHub. Those platforms are convenient to use, but they are closed source. Some resources related to these platforms cost money. Example: one parallel build agent process in Azure DevOps is free for private projects. For public projects, you have unlimited parallel build agent processes - as long as you host the agents yourself (checked in 2026). So why not host a similar platform entirely? If it is only your own projects, the extra load in addition to the build agents should be minimal.

Git is the tool used by the majority of developers for their source code repositories. Let's assume that the source code repository type shall always be git.

Many people use Gitea instead of GitHub. Both platforms are similar. There are forks/clones of Gitea, such as Forgejo.

Azure DevOps uses the term build pipelines for the definition of how to automatically build, test and deploy your projects. They are called workflows on GitHub-like platforms. The counterpart of Azure DevOps tasks are (GitHub) actions. GitHub, Gitea and Forgejo are so similar that most (GitHub) actions are compatible with all of those GitHub-like platforms. In practice, they are fully compatible as long as the actions do not contain any GitHub-specific stuff, such as GitHub URLs.

If you don't want to host a platform yourself, you can use platforms such as codeberg.org, which uses Forgejo under the hood.

I decided to host Forgejo myself.

Forgejo consists of two major parts:

- Code repositories with web UI

  - Hosts the source code, projects, issue tracker, wiki, etc.

- Forgejo runners - build agents

  - Runs workflows

Every Forgejo component can be hosted using container images with Podman. The runners require access to the Podman socket, which provides a Docker-compatible API. If you want to run this with rootless Podman and active SELinux, you need to grant access to the Podman socket. I will write a separate guide regarding this another time.

One important difference between Azure DevOps build agents and Forgejo runners is that you do not have to install multiple runners to run workflows in parallel, as you would have to do for Azure DevOps. When using Forgejo, the number of parallel jobs is simply a value in a config file. Depending on your hardware, you can have 2, 20, or more jobs running in parallel.

Maybe you like to access your git repositories through SSH. I disabled it in the Forgejo configuration to use HTTPS only.

Migrating a git repository with full history is done in three steps:

- Clone the repository with full history to your local machine. Full history means that you download all commits, not only the latest version.

- Change the remote URL of your local repository to the new URL of the target repository.

- Push the repository to the server, which will now write your local copy to the target repository including all commits.

If you have a lot of repositories and a lot of projects, you can automate this with scripts.
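Such a script could look like the following sketch. The URLs and the directory name are hypothetical. It uses a mirror clone, which is my choice for this example and not the only way: a plain clone also downloads the full history but only creates a local branch for the default branch, while a mirror clone carries over all branches and tags.

```python
import subprocess


def migration_commands(source_url: str, target_url: str, workdir: str) -> list[list[str]]:
    """Build the three git calls: clone, switch the remote URL, push."""
    return [
        ["git", "clone", "--mirror", source_url, workdir],
        ["git", "-C", workdir, "remote", "set-url", "origin", target_url],
        ["git", "-C", workdir, "push", "--mirror", "origin"],
    ]


def migrate(source_url: str, target_url: str, workdir: str) -> None:
    """Run the three steps and stop at the first failing command."""
    for command in migration_commands(source_url, target_url, workdir):
        subprocess.run(command, check=True)


# Hypothetical repository URLs; only printed here, not executed.
for command in migration_commands("https://old.example.test/team/app.git",
                                  "https://forgejo.example.test/team/app.git",
                                  "app.git"):
    print(" ".join(command))
```

The target repository has to exist (empty) on the new server before the push. A mirror clone may also contain server-specific refs, such as pull request refs, which the target may reject; in that case pushing branches and tags separately is an alternative.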

Maybe there is a way to migrate the boards and backlogs from Azure DevOps to Gitea/Forgejo too.

The biggest part of the work was to rewrite the build pipelines as workflows. This was because I had every step defined in the build pipeline with Azure DevOps tasks. There are similar (GitHub) actions, but in line with my long-term plan, I want to avoid tight coupling. So I wrote major parts of the build pipeline as a Cake script, and the workflow runs that Cake script. This has the advantage that I can run major parts of the build locally for testing. So my workflows install the basic requirements for Cake and then run the Cake script, which does the build and test. Then the workflow takes over and uses the build artifacts and test reports for the next steps.

Goal:

- Dedicated postgres database

- pgAdmin at port 8080/tcp

- Expose Forgejo web UI via port 80/tcp and 443/tcp

- Dedicated VM for the major Forgejo components.

- Dedicated VM for the Forgejo runner(s).

Configuration files:

- pgstack.network

- postgres.container

- postgres.env

- pgadmin.container

- pgadmin.env

- forgejo.container

- forgejo.env

- forgejo-runner.network

- forgejo-runner.container

- forgejo-runner.env

Conclusion

I did not mention all the little steps I had to do for the migration. I skipped the back-and-forth and the retries too. But from a high-level view, that's it.

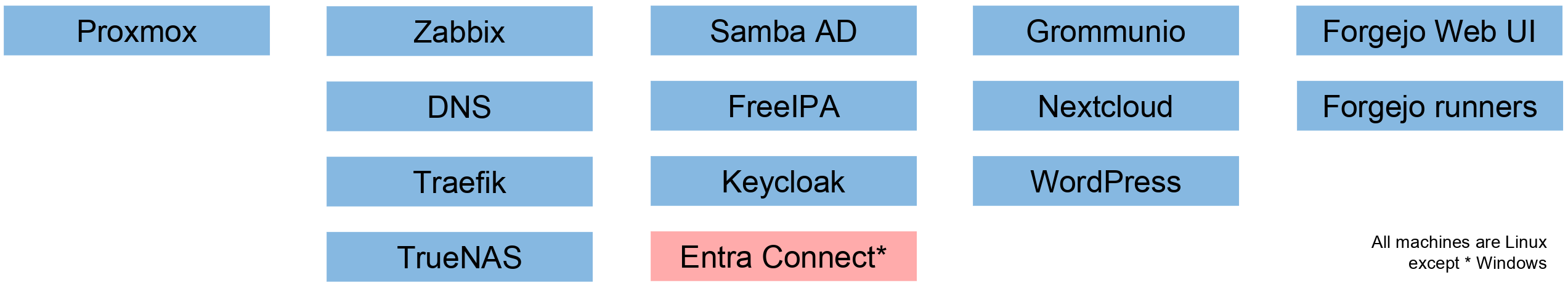

After a migration as described above, you should have something like this:

Hypervisor and VMs

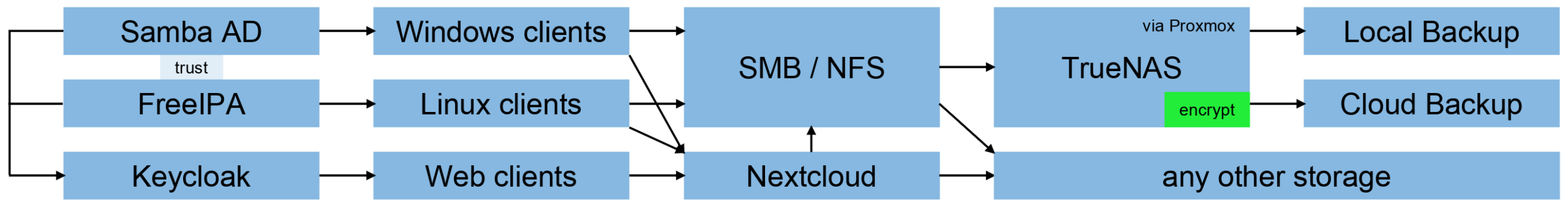

Clients, Identities and Storage

Network

Some Facts

It took some time to get there, but I can tell you some facts after running that environment for around four months:

- The total memory consumption on my two hypervisors used to be around 90% to 95% and went down to less than 50%. I got a lot of free RAM for other projects.

- The size of an entire backup of all VMs shrank from 1.4 TB to 0.4 TB and a backup takes less than two hours now instead of six hours before.

- The overall average CPU load on my servers is down to 3% now from 7% before.

- A reboot of the entire environment takes three minutes instead of 15 to 20 minutes. Especially grommunio starts much quicker than Exchange and needs far fewer resources in general.

- Starting a Samba AD DC takes at most 15 seconds.

- The only Windows machine left in my network is used to synchronize some users of my Active Directory with Azure Entra. This VM can and will be deleted as soon as I have migrated the remaining projects from Azure DevOps to my local Forgejo instance.